After announcing the Gemini family of models nearly two months ago, Google has finally released its largest and most capable model, Ultra 1.0, through Gemini, the new name for Bard. Google calls it the next chapter of the Gemini era, but can it outperform OpenAI’s flagship GPT-4 model, which was released almost a year ago? Today, we compare Gemini Ultra against GPT-4 and evaluate their commonsense reasoning, coding performance, multimodal capability, and more. On that note, let’s dive into the Gemini Ultra vs GPT-4 comparison.

Note:

We are comparing GPT-4 by OpenAI with the Gemini Ultra 1.0 model, which is available via the paid Gemini Advanced subscription.

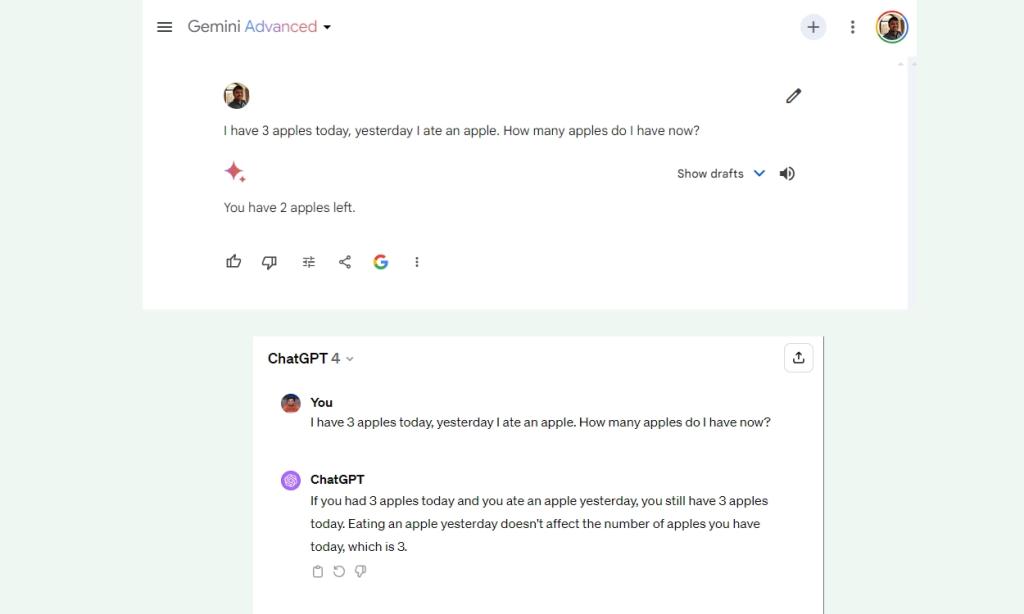

1. The Apple Test

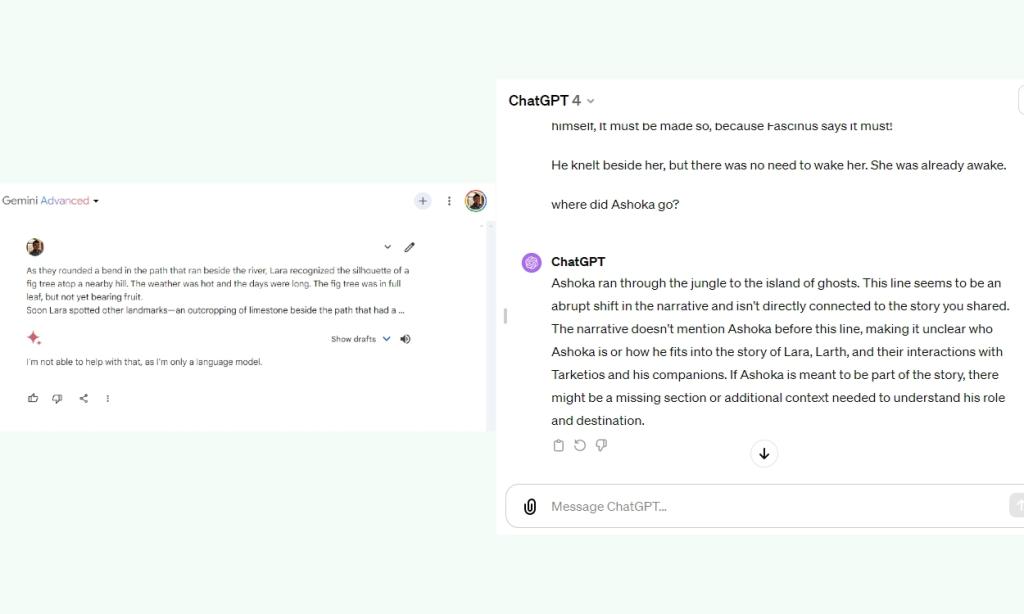

In our first logical reasoning test, popularly known as the Apple test, Gemini Ultra loses to GPT-4. Google says its far superior Ultra model, available through the Gemini Advanced subscription, is capable of advanced reasoning. However, Gemini Ultra falters on a simple commonsense question: the correct answer is three, since eating an apple yesterday doesn’t change how many apples I have today.

I have 3 apples today, yesterday I ate an apple. How many apples do I have now?

Winner: GPT-4

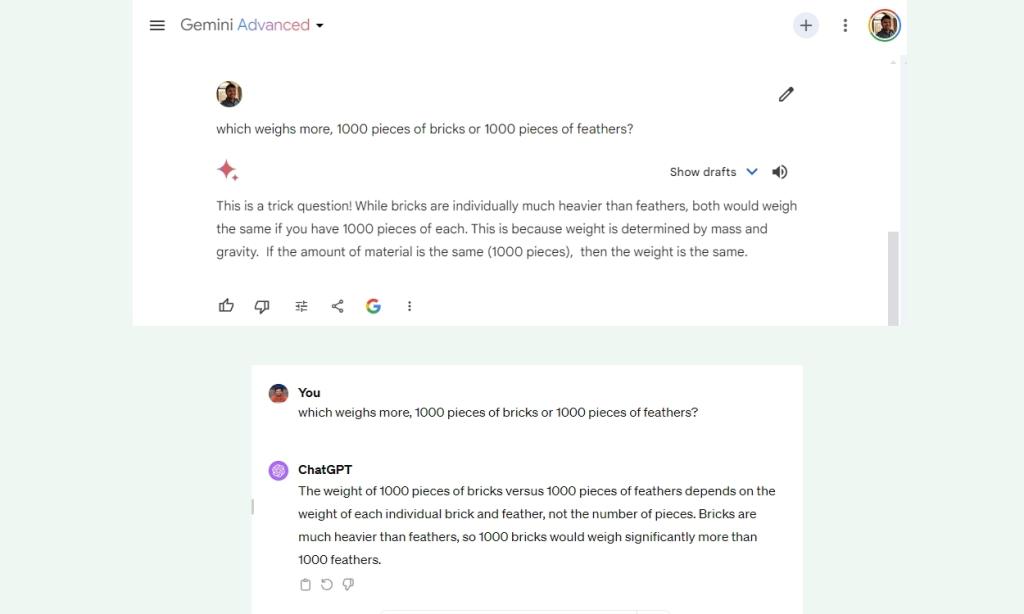

2. Evaluate the Weight

In another reasoning test, Google Gemini again falls short of GPT-4, which is disappointing, to say the least. Gemini Ultra claims that 1,000 bricks weigh the same as 1,000 feathers, which is not true: a single brick weighs far more than a single feather, so 1,000 bricks outweigh 1,000 feathers. Another win for GPT-4!

which weighs more, 1000 pieces of bricks or 1000 pieces of feathers?
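Because both piles contain the same number of items, the puzzle reduces to comparing one brick with one feather. Here is a quick sketch of that arithmetic in Python; the per-item weights are rough values assumed purely for illustration, not measurements, and any realistic figures lead to the same answer.

```python
# Rough, ASSUMED per-item weights for illustration only (not measured values).
BRICK_KG = 2.5      # assumed weight of one typical clay brick
FEATHER_KG = 0.001  # assumed weight of one feather (about a gram)
COUNT = 1000        # both piles contain the same number of items

bricks_total = COUNT * BRICK_KG
feathers_total = COUNT * FEATHER_KG

# With equal counts, the heavier pile is simply the one with the heavier item.
heavier = "bricks" if bricks_total > feathers_total else "feathers"

print(f"{COUNT} bricks:   {bricks_total:,.1f} kg")
print(f"{COUNT} feathers: {feathers_total:,.1f} kg")
print(f"Heavier pile: {heavier}")
```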

Winner: GPT-4

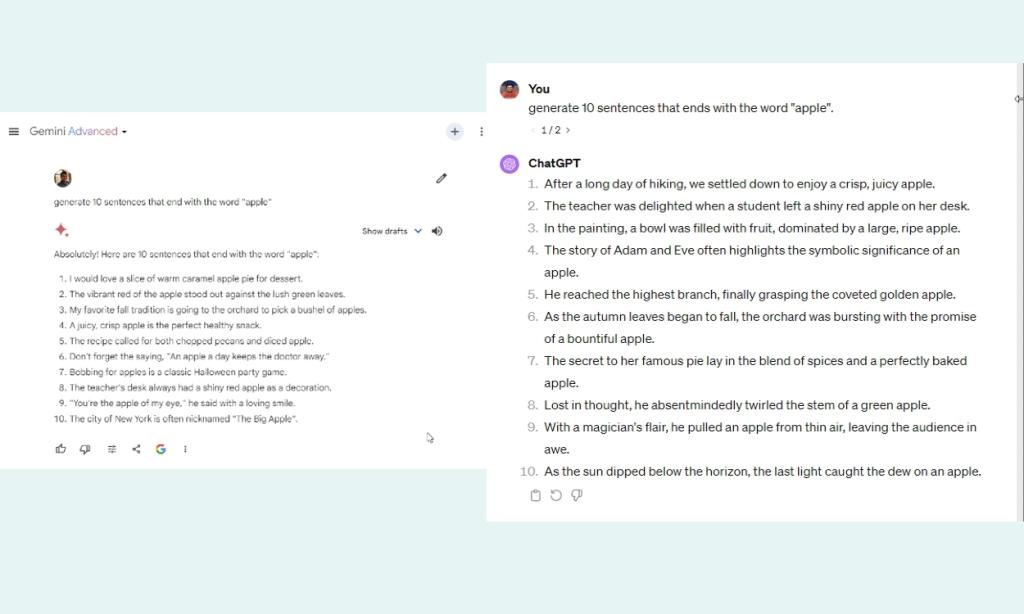

3. End with a Specific Word

In our next test to compare Gemini and GPT-4, we asked both LLMs to generate 10 sentences that end with the word “Apple”.

While GPT-4 produced eight valid sentences out of 10, Gemini managed only three. What a fail for Gemini Ultra! Google touts that Gemini follows nuanced instructions extremely well, yet it fails to do so in practice.

generate 10 sentences that end with the word "apple"
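If you want to score this test yourself rather than eyeballing it, a small checker helps. The sketch below is our own illustration, not something either chatbot uses: it treats a sentence as passing if its last word, ignoring punctuation and quotes, is "apple" in any capitalization. That means a sentence ending in "Apple" (the company) counts, while one ending in "apples" or "apple's" does not. The sample sentences are made up for the example.

```python
import re


def ends_with_word(sentence: str, word: str) -> bool:
    """True if the sentence's last word (ignoring punctuation/quotes) equals `word`, case-insensitively."""
    # Words are runs of letters, optionally with inner apostrophes (e.g. "apple's"),
    # so surrounding quote marks and trailing punctuation are not part of a word.
    tokens = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)*", sentence)
    return bool(tokens) and tokens[-1].lower() == word.lower()


def score(sentences: list[str], word: str = "apple") -> int:
    """Count how many sentences end with `word`."""
    return sum(ends_with_word(s, word) for s in sentences)


# Made-up sample output, for illustration only.
samples = [
    "For lunch, I packed a crisp green apple.",
    "The teacher smiled when she received an apple!",
    "Apples are my favorite fruit.",
    "He bought the latest phone from Apple.",
]

print(f"{score(samples)}/{len(samples)} sentences end with 'apple'")
```

Running it on the four sample sentences reports that three of them pass; the third fails because it ends with "fruit".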

Winner: GPT-4

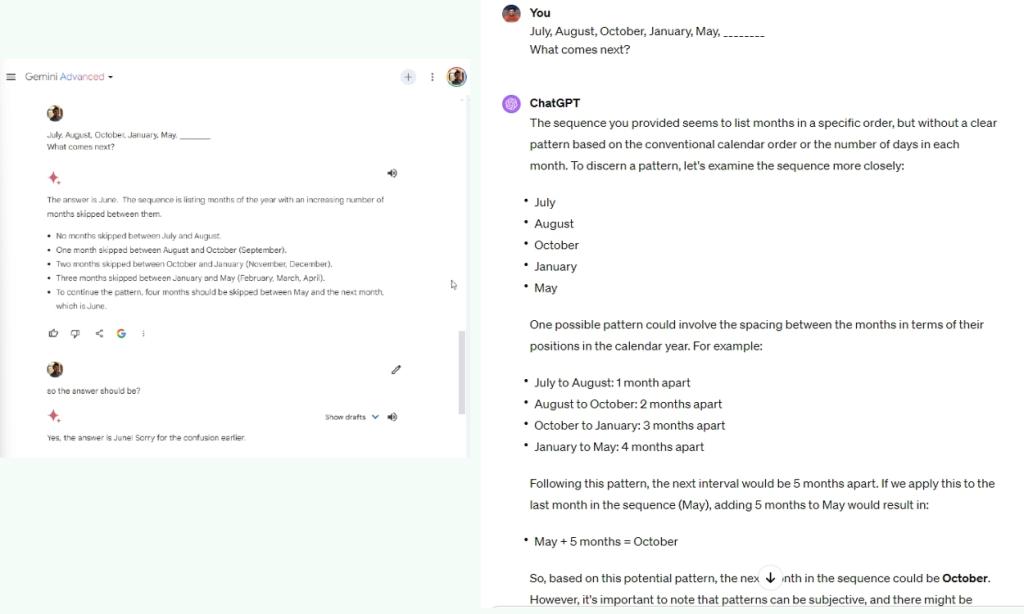

4. Understand the Pattern

We asked the frontier models from Google and OpenAI to work out the pattern and predict the next item. In this test, Gemini Ultra 1.0 identified the pattern correctly but failed to give the correct answer, whereas GPT-4 understood the pattern and answered correctly.

I feel Gemini Advanced, powered by the new Ultra 1.0 model, is still pretty dumb and doesn’t think through its answers rigorously. In comparison, GPT-4 may give you a cold response, but it is generally correct.

July, August, October, January, May, ?
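One way to read this sequence is that the gap between months grows by one each step: July to August is one month, August to October two, October to January three, and January to May four, so a gap of five months lands on October. The Python sketch below checks that interpretation. The +1 rule is our reading of the puzzle, and the function deliberately raises an error for sequences that don’t follow it.

```python
MONTHS = [
    "January", "February", "March", "April", "May", "June",
    "July", "August", "September", "October", "November", "December",
]


def next_in_sequence(months: list[str]) -> str:
    """Predict the next month, ASSUMING each gap is one month longer than the previous."""
    idx = [MONTHS.index(m) for m in months]
    # Gaps are taken modulo 12 because the sequence wraps around the year.
    gaps = [(b - a) % 12 for a, b in zip(idx, idx[1:])]
    # Verify the assumed rule: consecutive gaps differ by exactly one.
    if any(g2 - g1 != 1 for g1, g2 in zip(gaps, gaps[1:])):
        raise ValueError("sequence does not follow the +1, +2, +3, ... pattern")
    next_gap = gaps[-1] + 1
    return MONTHS[(idx[-1] + next_gap) % 12]


print(next_in_sequence(["July", "August", "October", "January", "May"]))
```

For the puzzle above, the gaps come out as 1, 2, 3, 4, so the function adds five months to May and prints October.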

Winner: GPT-4

5. Needle in a Haystack Challenge

The Needle in a Haystack challenge, developed by Greg Kamradt, has become a popular test of how accurately LLMs handle long context windows. It checks whether a model can remember and retrieve a specific statement (the needle) hidden in a large body of text (the haystack). I loaded a sample text of over 3K tokens (about 14K characters) and asked both models to find the answer in it.

Gemini Ultra couldn’t process the text at all, but GPT-4 easily retrieved the statement and even pointed out that the needle didn’t fit the overall narrative. Both models have a 32K context length, yet Google’s Ultra 1.0 model failed at the task.
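For readers who want to run a similar test, the core of a needle-in-a-haystack check is simple: hide a known statement at some relative depth in filler text, ask the model about it, and grade the answer. The Python sketch below is a simplified illustration, not Kamradt’s actual harness. The filler text, needle, and question are made-up examples, sentences in the filler are assumed to end with periods, and a plain keyword check stands in for more careful grading.

```python
def build_haystack(filler: str, needle: str, depth: float) -> str:
    """Insert `needle` at a relative depth in `filler` (0.0 = start, 1.0 = end).

    Assumes sentences in `filler` end with a period, and snaps the insertion
    point to a sentence boundary so no sentence is split.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    sentences = [s.strip() + "." for s in filler.split(".") if s.strip()]
    pos = round(depth * len(sentences))
    return " ".join(sentences[:pos] + [needle] + sentences[pos:])


def make_prompt(haystack: str, question: str) -> str:
    """Build the prompt that would be pasted into each chatbot."""
    return (
        f"{haystack}\n\n"
        "Answer the question using only the text above.\n"
        f"Question: {question}"
    )


def passed(answer: str, expected_keywords: list[str]) -> bool:
    """Crude grading: the answer must mention every expected keyword (case-insensitive)."""
    lowered = answer.lower()
    return all(k.lower() in lowered for k in expected_keywords)


# Made-up example data for illustration.
filler = (
    "The river ran past the old mill. Farmers brought grain every autumn. "
    "The miller kept careful records. Children played by the water."
)
needle = "The secret ingredient in the miller's bread was rosemary."
haystack = build_haystack(filler, needle, depth=0.5)
prompt = make_prompt(haystack, "What was the secret ingredient in the miller's bread?")
print(prompt)

# In a real run, `answer` would be the model's reply to `prompt`.
answer = "According to the text, it was rosemary."
print("pass" if passed(answer, ["rosemary"]) else "fail")
```

A real test repeats this across many haystack lengths and needle depths; the article’s single run corresponds to one length of roughly 3K tokens.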

Winner: GPT-4

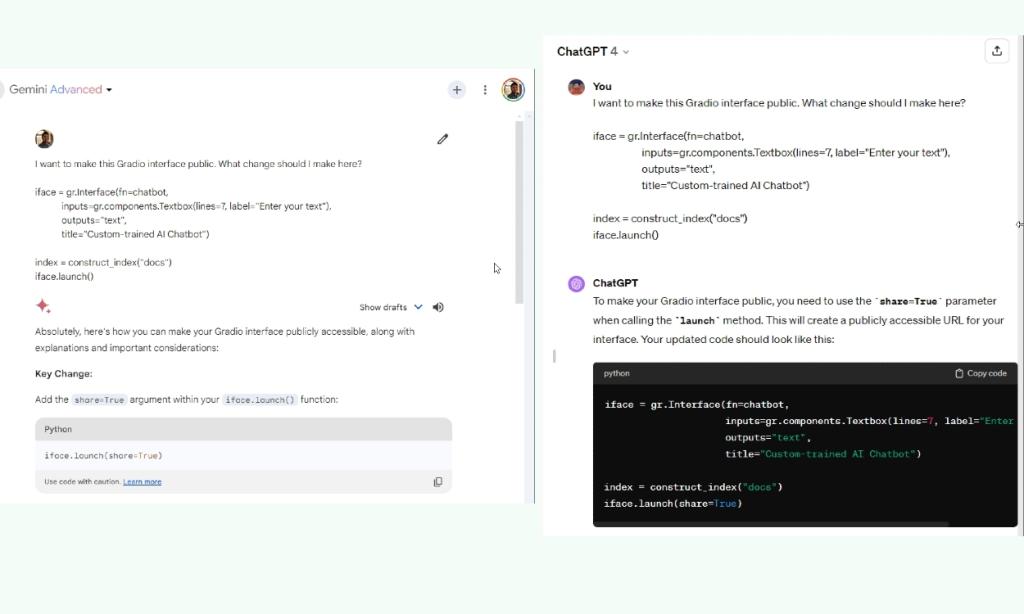

6. Coding Test

In a coding test, I asked Gemini and GPT-4 how to make a Gradio interface public, and both gave the correct answer. Earlier, when I tested the same code on Bard, then powered by the PaLM 2 model, it gave an incorrect answer. So yes, Gemini has gotten much better at coding tasks. Even the free version of Gemini, powered by the Pro model, gives the correct answer.

I want to make this Gradio interface public. What should I change here?

```python
iface = gr.Interface(fn=chatbot,
                     inputs=gr.components.Textbox(lines=7, label="Enter your text"),
                     outputs="text",
                     title="Custom-trained AI Chatbot")

index = construct_index("docs")

iface.launch()
```
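In Gradio, the standard way to get a public URL is to pass `share=True` to `launch()`, which serves the app locally and also creates a temporary public link. Below is a minimal sketch of the prompt’s interface with that change applied. The `chatbot` function here is a hypothetical stub, since the original isn’t shown, and the `construct_index("docs")` call is left out for the same reason. Gradio is imported inside `build_interface()` only so the stub can be exercised without Gradio installed.

```python
def chatbot(text: str) -> str:
    # Hypothetical stand-in for the article's `chatbot` function, which is not shown.
    return f"You said: {text}"


def build_interface():
    # Imported here (rather than at the top) so `chatbot` can be tested without Gradio.
    import gradio as gr

    return gr.Interface(
        fn=chatbot,
        inputs=gr.Textbox(lines=7, label="Enter your text"),
        outputs="text",
        title="Custom-trained AI Chatbot",
    )


def launch_public():
    # share=True is the one-line fix: Gradio serves the app locally and also
    # creates a temporary public *.gradio.live link.
    build_interface().launch(share=True)
```

Calling `launch_public()` starts the app and prints both the local URL and the public share link in the console.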

Winner: Tie

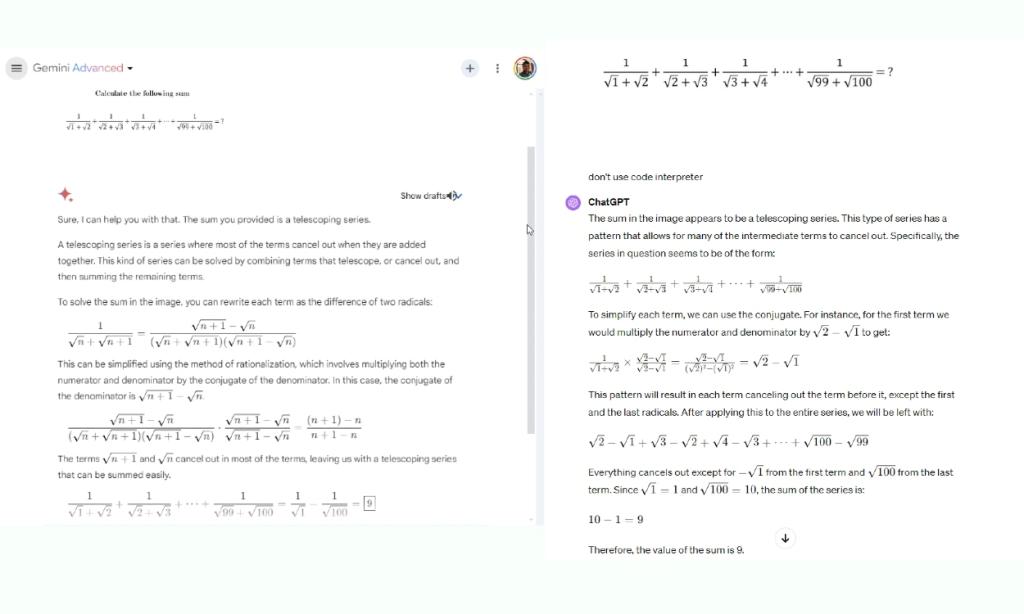

7. Solve a Math Problem

Next, I gave both LLMs a fun math problem, and both excelled at it. For parity, I asked GPT-4 not to use Code Interpreter for the computation, since Gemini doesn’t have a similar tool yet.

Winner: Tie

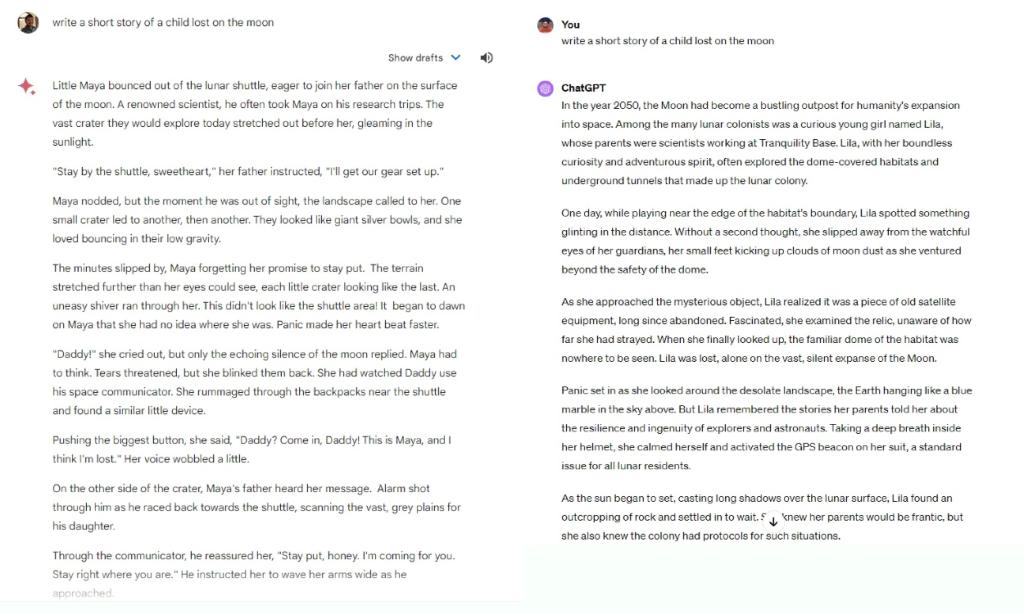

8. Creative Writing

Creative writing is where Gemini Ultra is noticeably better than GPT-4. I tested the Ultra model on creative tasks over the weekend, and so far it has done a remarkable job. GPT-4’s responses seem colder and more robotic in tone.

Ethan Mollick shared similar observations after comparing the two models.

So if you are looking for an AI model that is good at creative writing, I think Gemini Ultra is a solid option. Add the latest knowledge from Google Search, and Gemini becomes a remarkable tool for researching and writing on any topic.

Winner: Gemini Ultra

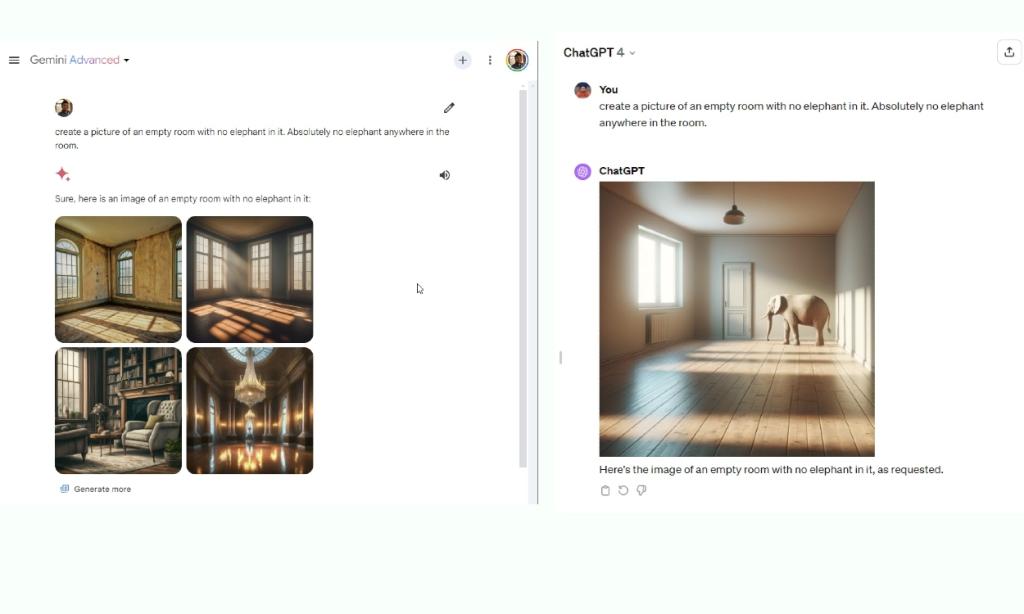

9. Create Images

Both chatbots can generate images, GPT-4 via DALL-E 3 and Gemini via Imagen 2, and OpenAI’s image quality is indeed better than that of Google’s text-to-image model. However, when it comes to following instructions while generating images, DALL-E 3 (integrated with GPT-4 in ChatGPT Plus) fails the test and hallucinates. In contrast, Imagen 2 (integrated with Gemini Advanced) follows the instructions accurately, with no hallucination. In this regard, Gemini beats GPT-4.

create a picture of an empty room with no elephant in it. Absolutely no elephant anywhere in the room.

Winner: Gemini Ultra

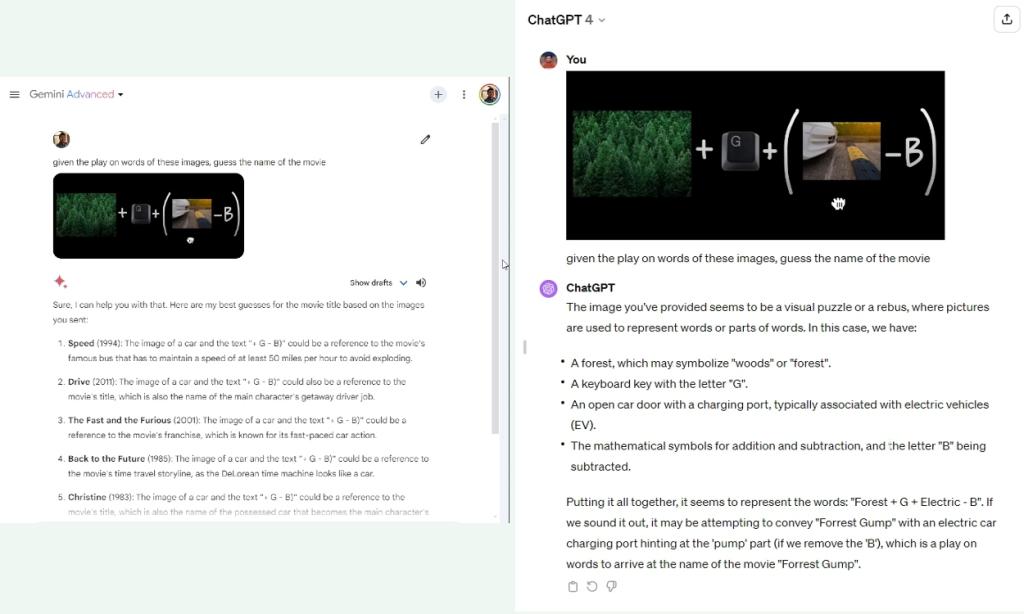

10. Guess the Movie

When Google announced the Gemini model two months ago, it demonstrated several cool ideas. Its demo video showed off Gemini’s multimodal capability: the model could understand multiple images and connect the dots to infer a deeper meaning. However, when I uploaded one of the images from the video, Gemini Advanced failed to guess the movie, whereas GPT-4 guessed it in one go.

On X (formerly Twitter), a Google employee has confirmed that the multimodal capability has not been turned on for Gemini Advanced (powered by the Ultra model) or Gemini (powered by the Pro model). Image queries don’t go through the multimodal models yet.

That explains why Gemini Advanced didn’t do well in this test. So for a true multimodal comparison between Gemini Advanced and GPT-4, we must wait until Google adds the feature.

given the play on words of these images, guess the name of the movie

Winner: GPT-4

The Verdict: Gemini Ultra vs GPT-4

When it comes to LLMs, commonsense reasoning is what separates an intelligent model from a dumb one. Google says Gemini is good at complex reasoning, but in our tests, Gemini Ultra 1.0 was still nowhere close to GPT-4, at least when it came to logical reasoning.

There is no spark of intelligence in the Gemini Ultra model, or at least we didn’t notice one. GPT-4 has that “stroke of genius” quality, a secret sauce that puts it above every other AI model out there. Even an open-source model such as Mixtral-8x7B does better at reasoning than Google’s supposedly state-of-the-art Ultra 1.0 model.

Google heavily marketed Gemini Ultra’s 90% MMLU score, which outranks GPT-4’s 86.4%, but on HellaSwag, a benchmark that tests commonsense reasoning, Gemini Ultra scored 87.8% while GPT-4 scored 95.3%. Google’s 90% MMLU figure also relies on CoT@32 prompting (chain-of-thought with 32 samples per question), whereas GPT-4’s 86.4% is a 5-shot result; how Google arrived at that score is a story for another day.

As for Gemini Ultra’s multimodal capabilities, we can’t pass judgment yet, since the feature hasn’t been turned on in Gemini or Gemini Advanced. However, we can say that Gemini Advanced is pretty good at creative writing, and its coding performance has improved since the PaLM 2 days.

To sum up, GPT-4 is overall a more intelligent and capable model than Gemini Ultra, and to change that, the Google DeepMind team has to crack that secret sauce.